OpenAI cybersecurity model rolls out to defend digital frontiers

A new era of digital warfare begins

The threat landscape of the internet has changed for good. As we move deeper into 2026, the threats facing our personal data, national infrastructure, and global financial systems have become more sophisticated than ever. Hackers are using advanced artificial intelligence to find holes in security systems faster than humans can patch them. However, the good guys just got a massive upgrade. The official OpenAI cybersecurity model, known as GPT-5.5-Cyber, has finally arrived.

This launch is not just another software update. It is a strategic move designed to tip the scales back in favor of those who protect our digital world. For years, AI companies were cautious about giving too much power to security tools for fear that they could be misused. But as the frequency of cyber-attacks grows, the consensus has shifted. We need powerful AI to fight powerful AI. The OpenAI cybersecurity model is the answer to that urgent need, providing elite teams with the reasoning and coding capabilities required to stay one step ahead of the bad actors.

Why the OpenAI cybersecurity model is a game changer

The release of GPT-5.5-Cyber is being hailed as a landmark moment in the tech industry. This version of the model has been fine-tuned to understand the complex language of software vulnerabilities. Unlike the standard versions of ChatGPT that most people use for writing emails or generating images, the OpenAI cybersecurity model is built for the trenches of digital combat. It can analyze millions of lines of code in seconds, identifying hidden bugs that could lead to a massive data breach.

One of the most impressive features of this model is its ability to perform what experts call “vulnerability triage.” In the past, when a security team found a potential problem, they had to spend hours or even days determining whether it was a real threat or just a false alarm. The OpenAI cybersecurity model can now automate this process, allowing defenders to focus their energy on the most critical issues. This speed is essential when dealing with “zero-day” vulnerabilities, which are flaws that attackers discover and exploit before the software creators even know they exist.
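
To make the idea of triage concrete, here is a minimal sketch in Python. It does not use GPT-5.5-Cyber or any OpenAI API. The `Finding` fields, the scoring weights, and the `triage` function are all hypothetical, chosen only to illustrate how a queue of raw findings might be filtered and ranked so that likely false alarms drop out and the most critical issues surface first.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    """One raw report from a scanner (all fields are illustrative assumptions)."""
    identifier: str
    severity: float    # 0.0 to 10.0, how bad the flaw would be if real
    confidence: float  # 0.0 to 1.0, estimated chance it is not a false alarm
    reachable: bool    # whether untrusted input can reach the affected code


def priority(finding: Finding) -> float:
    """Combine severity and confidence; boost flaws reachable by an attacker."""
    score = finding.severity * finding.confidence
    return score * 1.5 if finding.reachable else score


def triage(findings: list[Finding], min_confidence: float = 0.3) -> list[Finding]:
    """Drop probable false alarms, then rank the rest from most to least urgent."""
    likely_real = [f for f in findings if f.confidence >= min_confidence]
    return sorted(likely_real, key=priority, reverse=True)


findings = [
    Finding("sql-injection-login", severity=9.0, confidence=0.9, reachable=True),
    Finding("unused-debug-endpoint", severity=5.0, confidence=0.6, reachable=False),
    Finding("possible-overflow-parser", severity=8.0, confidence=0.1, reachable=True),
    Finding("weak-hash-legacy", severity=6.0, confidence=0.8, reachable=True),
]

ranked = triage(findings)
for finding in ranked:
    print(finding.identifier)
```

In this toy data set the low-confidence parser report is treated as a probable false alarm and dropped, while the remaining three findings are ordered by urgency. A real system would derive the severity and confidence values from analysis rather than hard-coding them.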

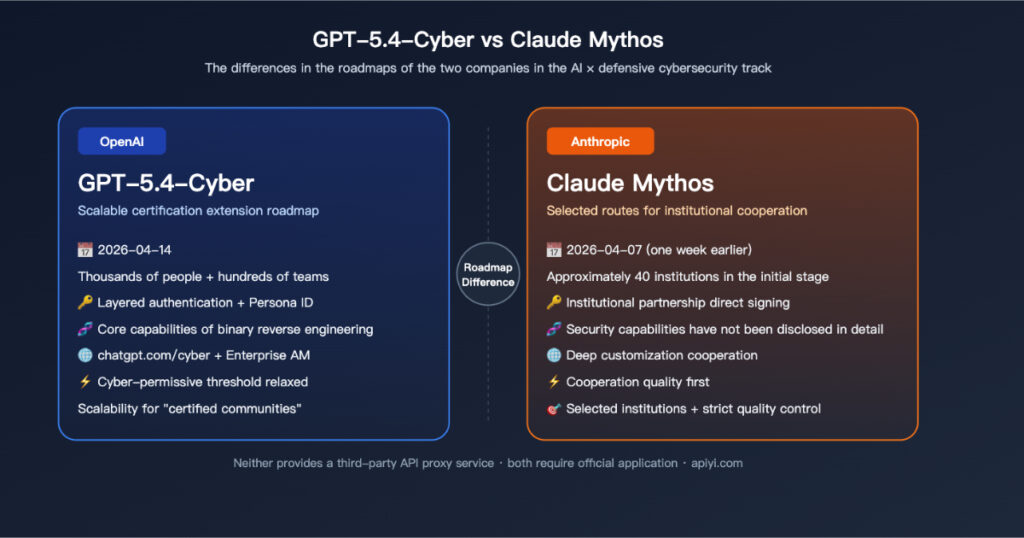

Competing with the shadow of Anthropic Mythos

The timing of this release is no coincidence. Just one month ago, the AI world was shaken by the debut of Anthropic’s Mythos model. That model was described by its creators as being so powerful and potentially dangerous that it could not be released to the general public. It sparked a wave of concern among world leaders and security experts who worried about what would happen if such a tool fell into the wrong hands.

OpenAI has taken a slightly different approach with its own cybersecurity model. While it is also keeping the most powerful features restricted, it is moving quickly to get the tools into the hands of verified “defenders.” By launching the Trusted Access for Cyber (TAC) program, OpenAI is ensuring that thousands of vetted professionals can use GPT-5.5-Cyber to secure the world’s most important software. This creates a competitive environment where the two biggest names in AI are racing to prove who can offer the best protection.

Inside the Trusted Access for Cyber program

The “Trusted Access for Cyber” program is the gatekeeper for this new technology. OpenAI has made it clear that not just anyone can sign up to use the OpenAI cybersecurity model. To gain access, organizations must go through a rigorous vetting process. This includes verifying their identity, their professional track record, and their intended use for the AI. This “know-your-customer” approach is designed to prevent the model from being used to create new types of malware or to launch attacks.

Once a team is accepted into the program, they gain access to a more “permissive” version of the AI. This means the model is allowed to discuss and analyze hacking techniques that would normally be blocked by the standard safety filters. This is a bold move, but a necessary one. You cannot defend against an attack if your AI is not allowed to understand how that attack works. The OpenAI cybersecurity model acts as a highly intelligent consultant that can simulate how a hacker might try to break into a system, allowing the defenders to reinforce those weak points before an actual strike occurs.

Real world applications of GPT-5.5-Cyber

What does this look like in practice? Imagine a major bank that processes billions of transactions every day. Their software is incredibly complex and constantly being updated. With the OpenAI cybersecurity model, their security team can run continuous scans of their entire codebase. If a new update accidentally introduces a security flaw, the AI can flag it immediately. It can even suggest the exact code needed to fix the problem, saving the bank’s engineers countless hours of work.
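
The flow described above, checking each update for newly introduced flaws, can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `RISKY_PATTERNS` table is a hypothetical stand-in for the model's judgment, and `added_lines` and `flag_update` are made-up helper names. Nothing here calls GPT-5.5-Cyber or reflects how it works internally. The sketch only shows the general shape of "look at what changed, then flag anything suspicious."

```python
import difflib
import re

# Hypothetical rules standing in for a model's analysis of each changed line.
RISKY_PATTERNS = {
    "use of eval on dynamic input": re.compile(r"\beval\("),
    "hard-coded credential": re.compile(r"password\s*=\s*[\"']"),
}


def added_lines(old_source: str, new_source: str) -> list[str]:
    """Return only the lines that the update introduced."""
    diff = difflib.ndiff(old_source.splitlines(), new_source.splitlines())
    # ndiff prefixes newly added lines with "+ ".
    return [line[2:] for line in diff if line.startswith("+ ")]


def flag_update(old_source: str, new_source: str) -> list[tuple[str, str]]:
    """Pair each suspicious new line with the label of the rule it matched."""
    flags = []
    for line in added_lines(old_source, new_source):
        for label, pattern in RISKY_PATTERNS.items():
            if pattern.search(line):
                flags.append((label, line.strip()))
    return flags


old_version = "def total(values):\n    return sum(values)\n"
new_version = (
    "def total(values):\n"
    "    return sum(values)\n"
    "\n"
    "def run(expr):\n"
    "    return eval(expr)\n"
)

flags = flag_update(old_version, new_version)
for label, line in flags:
    print(f"{label}: {line}")
```

Restricting the check to added lines keeps each scan proportional to the size of the update rather than the size of the whole codebase, which is what makes running it on every change practical.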

Beyond the corporate world, this technology is being used to protect critical infrastructure. Power grids, water treatment plants, and transportation systems are all running on software that is often decades old. These systems are highly vulnerable to digital sabotage. By applying the OpenAI cybersecurity model to these legacy systems, governments can identify and patch vulnerabilities that have existed for years. This is not just about protecting data; it is about protecting the physical safety of millions of people.

The debate over AI safety and transparency

As with any major advancement in AI, the release of the OpenAI cybersecurity model has reignited the debate over safety. Some critics argue that even with strict vetting, there is a risk that the technology could be leaked or reverse-engineered by malicious actors. They point to the “arms race” nature of the industry as a sign that we might be moving too fast. If one company releases a powerful tool, others will feel pressured to release even more powerful ones to keep up.

OpenAI has responded to these concerns by promising a high level of transparency with government regulators. They are sharing “system cards” that explain exactly how the model was trained and what its limitations are. They are also committing millions of dollars in API credits to non-profit organizations and academic researchers who are studying AI safety. The goal is to ensure that while the OpenAI cybersecurity model is a powerful weapon for defense, it is also being developed with a deep sense of responsibility toward the future of humanity.

How small businesses can benefit from the shift

While the most advanced version of the OpenAI cybersecurity model is restricted to elite teams, the benefits are expected to trickle down to everyone. As these models get better at protecting software, what they learn is being integrated into the basic security features of everyday products. This means that even if you are a small business owner who does not have a dedicated security team, the platforms you use will become more secure because they were built using AI-assisted tools.

We are already seeing this happen with popular web development platforms and cloud storage providers. They are using the OpenAI cybersecurity model to audit their own systems, making it much harder for hackers to target their users. This is the “rising tide” effect of AI-powered security. By making the foundations of the internet stronger, we are making the entire digital ecosystem safer for every single person who goes online.