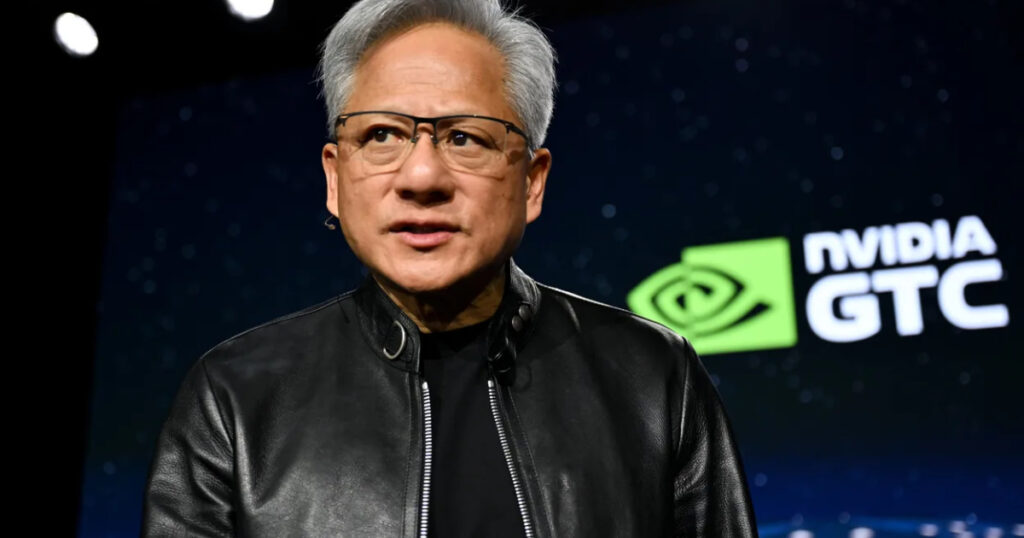

Jensen Huang Says ‘We’ve Achieved AGI’: This is what he said and what it potentially signals in 2026

Nvidia CEO Jensen Huang told podcaster Lex Fridman on Monday, March 23, 2026: “I think we’ve achieved AGI.” Within hours, the clip was circulating on every tech platform. By Tuesday morning it was trending in India. And like almost every major AI headline in the past two years, it is simultaneously more interesting and less definitive than the headline makes it sound.

The statement is worth unpacking in full, because what Huang actually said, the context he said it in, and the hedges he immediately added tell a different story from the four-word quote that is doing the rounds.

What Jensen Huang Actually Said: The Full Context

Huang was asked a specific question by Fridman. The question was not “has AGI been achieved?” The question was: what is the timeline for an AI system capable of starting, growing, and running a successful technology company worth more than one billion dollars?

To that specific, narrow question, Huang answered: “I think it’s now. I think we’ve achieved AGI.”

He then immediately began walking that back. He noted that Fridman had said a billion-dollar company and had not specified for how long. He added that it was not out of the question for an AI agent to create a web service, a simple app that a few billion people used briefly for a small fee, which then went out of business shortly afterward.

He then said directly: “The odds of 100,000 of those agents building Nvidia is zero percent.”

This is the complete version of what he said. Huang agreed that AI can, right now, in principle, create a short-lived billion-dollar business. He explicitly denied that AI could build a company with the complexity, depth, sustained strategic decision-making, and institutional knowledge of Nvidia. The headline and the full statement are not the same thing.

“The odds of 100,000 of those agents building Nvidia is zero percent.” – Jensen Huang, same interview, two minutes after the AGI quote

Why AGI Is So Hard to Define: What Different Leaders Are Saying

The reason this debate keeps recurring is that AGI has no agreed definition. Every major tech figure is working from a different version of the term, and that means every declaration about AGI is also a declaration about which definition the speaker prefers.

| Who Said It | What They Mean by AGI |
| --- | --- |
| Jensen Huang (Nvidia) | AI that can autonomously create a billion-dollar company, even temporarily. By this definition: achieved. |
| Sam Altman (OpenAI) | Said in February 2026 OpenAI has ‘basically built AGI, or very close to it’ but called it a ‘spiritual’ statement, not literal. Added AGI needs ‘a lot of medium-sized breakthroughs’ still. |
| Demis Hassabis (Google DeepMind) | AI with human-level capabilities including continual learning and long-term planning. Current models lack these. Timeline: five to eight years, with major breakthroughs required. |
| OpenAI (contractual) | Contractual AGI clause with Microsoft defines AGI as a system capable of generating at least 100 billion USD in profit. By this definition: not achieved. |
| Elon Musk (xAI) | Has told employees AGI could emerge within two years, possibly this year. Definition not formally specified. |
| Most AI researchers | A system capable of performing any intellectual task a human can do, across all domains, with transfer learning and generalisation. Consensus: not yet achieved. |

Why Huang’s Statement Is Different from Past CEO Claims

In recent months, the trend among AI company leaders has been to move away from the term AGI and replace it with more specific, less loaded language. Google prefers ‘highly capable AI systems.’ Anthropic talks about AI that can do ‘most economically valuable cognitive work.’ OpenAI’s own public communications have shifted toward describing capabilities rather than declaring milestones.

Huang’s statement is a deliberate break from that trend. Nvidia controls roughly 80% of the AI chip market. When the person whose hardware trains nearly every major AI model makes a declaration about AGI, the industry cannot simply ignore it or file it under ‘one person’s opinion.’ It forces every other company to agree, disagree, or explain its own position.

That is exactly what happened within 24 hours of the podcast going live. The statement landed at a moment when the definition is contested, the person making the claim is the most powerful supplier in the AI industry, and the end of Nvidia’s Q1 2026 earnings quarter is one week away.

When the man whose chips train nearly every major AI model says AGI has arrived, the industry cannot ignore it, even if the statement is deliberately narrow.

The Real-World Agent Data Huang Was Pointing To

Huang’s statement was not made in a vacuum. He referenced OpenClaw, an open-source AI agent platform, and its viral adoption. The broader data on autonomous AI agents in 2026 supports the direction of his argument, even if it does not support the AGI label.

- Cursor: Over one-third of merged pull requests on the coding platform are now generated by autonomous AI agents with minimal human review.

- Bajaj Finance (India): AI voice agents now account for 10% of the company’s loan disbursements, handling the full customer interaction autonomously.

- Manus: The autonomous agent platform crossed Rs. 830 crore ARR (USD 100 million) in eight months and was subsequently acquired by Meta.

- OpenClaw / NemoClaw: Nvidia developed NemoClaw in collaboration with Peter Steinberger, the creator of OpenClaw. Steinberger has since joined OpenAI, linking both platforms to the broader AI agent infrastructure.

These are not demonstrations. These are production deployments generating real revenue. The pattern Huang is describing, AI systems executing economically meaningful work with minimal human supervision, is happening. Whether that constitutes intelligence, general or otherwise, is the part that remains genuinely open.

Why the Definition Matters Beyond Philosophy

The question of what counts as AGI is not just an intellectual exercise. It has direct legal and financial consequences.

The OpenAI-Microsoft contract

OpenAI’s partnership agreement with Microsoft includes a clause that terminates certain obligations if OpenAI achieves AGI. OpenAI’s contractual definition of AGI sets the threshold at a system capable of generating at least USD 100 billion in profit. No current AI system meets that threshold. But the fact that the clause exists means that every CEO who declares AGI has been achieved is, intentionally or not, making a statement with potential legal implications for major commercial agreements across the industry.

Investment and regulatory positioning

Billions of dollars in AI investment have been justified by the premise that AGI is an achievable future milestone. If AGI is now declared to have arrived, the question of what that investment is building toward changes. Regulators in India, the EU, and the US are also watching these declarations closely, because AGI is a threshold that triggers different risk assessments and potential governance frameworks in several ongoing policy discussions.

What it means for Indian AI users and companies

For Indian businesses and users of AI tools, the practical question is simpler: can AI agents reliably handle complex, high-stakes business tasks without supervision? The Bajaj Finance deployment is a real Indian example of autonomous agents working at scale. But the gap between a voice agent handling loan disbursements and a system capable of making the strategic decisions that built Bajaj Finance is the same gap Huang acknowledged when he said the odds of agents building Nvidia are zero percent.

The Honest Read on What Huang Said

Huang used a specific, narrow definition of AGI, agreed that AI meets that definition right now, then explicitly excluded the harder version of AGI from his claim. His statement is defensible under his own definition. It is not defensible under the definitions used by most researchers, by regulators, or in OpenAI’s own contractual language.

What makes his statement worth paying attention to is not the AGI label. It is the underlying trend he is pointing at. Autonomous AI agents are moving from experiment to production infrastructure faster than most people outside the industry appreciate. The Cursor, Bajaj Finance, and Manus data points are real. The question of whether that constitutes general intelligence is a philosophical one. The question of whether it constitutes a genuine shift in what AI can do commercially is not.

The AGI label is the distraction. The underlying shift in what autonomous agents can do commercially is the story worth watching.

Frequently Asked Questions

Did Jensen Huang say Nvidia has built AGI?

No. Huang said he believes AGI has been achieved in response to a specific question about whether AI could autonomously create and run a billion-dollar technology company. He was responding to Lex Fridman’s definition of AGI, not making a general claim. He immediately added that the odds of AI agents building a company with Nvidia’s complexity and longevity are zero percent.

What is AGI?

AGI stands for Artificial General Intelligence. It most commonly refers to an AI system that can perform any intellectual task a human can do, across all domains, with the ability to learn, transfer knowledge, and generalise to new situations. There is no agreed technical definition, which is why different leaders use the term to mean different things. The most demanding definitions include sustained long-term planning, continual learning, and strategic decision-making.

Has AGI been achieved?

Under most researcher definitions and under OpenAI’s own contractual definition, no. Under Huang’s narrow definition, which equates AGI with the ability to autonomously create a short-lived billion-dollar business, he argues yes. Sam Altman said OpenAI has ‘basically built AGI, or very close to it’ but described that as a ‘spiritual’ statement rather than a literal one. Demis Hassabis of Google DeepMind puts meaningful AGI at five to eight years away with major breakthroughs still required.

What did Jensen Huang say about AI agents?

Huang cited the viral success of OpenClaw and the broader trend of AI agents being used to build and run digital services with minimal human input. He said he would not be surprised if an AI agent created a popular app that briefly attracted billions of users at a low price per use. He also described this as different from sustained, complex enterprise-level intelligence, which he said AI cannot replicate.

Why does the AGI definition matter?

The definition matters legally because OpenAI’s partnership agreement with Microsoft terminates certain obligations when AGI is achieved, with AGI defined in that contract as a system capable of generating at least USD 100 billion in profit. It matters for investment because billions of dollars have been raised with AGI as a stated goal. It matters for regulation because AGI is a threshold that triggers different risk assessments in several active policy discussions in India, the EU, and the US.
