What Is Anthropic? The AI Safety Company That Took On the Pentagon and Won Over the World

Anthropic started with seven people and a disagreement. Today it is one of the most valuable private companies on earth. Its AI is used in hospitals, banks, and military bases, and the company recently refused a Pentagon demand for unrestricted use of that AI. This is the story of how that happened.

What Is Anthropic and Why Does It Matter?

Anthropic is an American artificial intelligence company based in San Francisco. It builds and researches large language models, which are AI systems that can read, write, answer questions, write code, and hold conversations. Its main product is an AI assistant called Claude.

What makes Anthropic different from most AI companies is its stated reason for existing. The company describes itself as an AI safety company. That means its main goal is not just to build powerful AI, but to make sure that AI is safe and useful for people. Two of its co-founders, siblings Dario Amodei and Daniela Amodei, believe that AI could be one of the most important technologies in human history, and that getting it right matters more than getting it first.

As of February 2026, Anthropic is valued at $380 billion. That makes it one of the most valuable private companies in the world. Its AI assistant Claude is now the most downloaded AI app in more than 20 countries, having recently passed both ChatGPT and Google Gemini in Apple’s App Store.

How Anthropic Was Founded: The Story Behind the Split from OpenAI

In 2021, a group of seven researchers left OpenAI, one of the most well-known AI companies in the world. They had concerns about the direction the company was taking. Some felt that safety was not being treated as seriously as it should be as the push for more powerful AI systems accelerated.

The group was led by Dario Amodei, who had been OpenAI’s Vice President of Research, and his sister Daniela Amodei. Together with five other colleagues, they started Anthropic with a clear mission: to study AI safety and build AI systems that are reliable, honest, and unlikely to cause harm.

The name Anthropic comes from the adjective anthropic, which describes things relating to human beings, human existence, and our place in the universe. It is a nod to the company’s belief that humanity and AI need to coexist carefully.

In the summer of 2022, the team finished training its first AI model, which it called Claude. The company decided not to release it to the public right away. It was worried that releasing a powerful AI without enough testing could start a race between companies to launch faster and faster, with less and less regard for safety. That caution set the tone for how the company would operate going forward.

What Is Constitutional AI? Anthropic’s Approach to Safe AI Explained

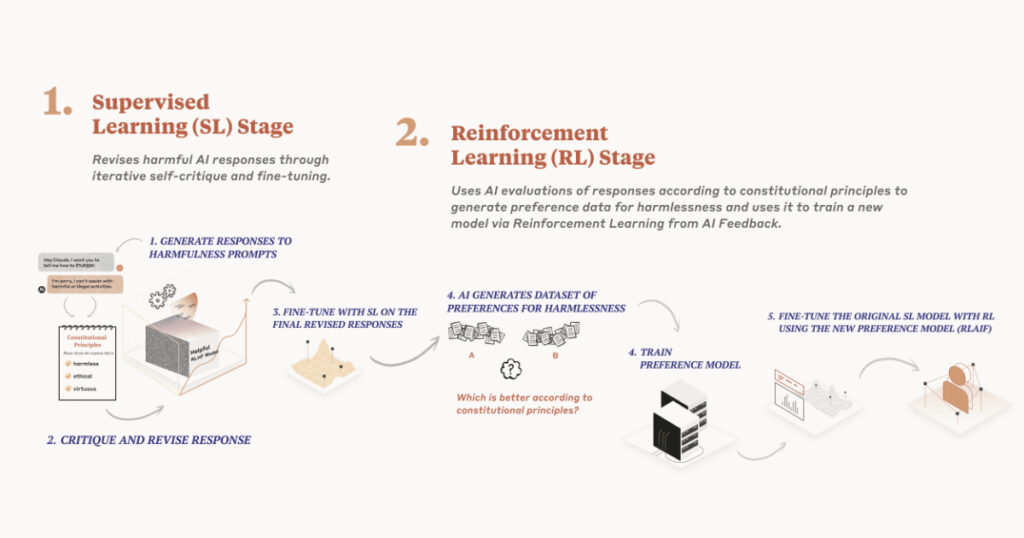

The most important idea that Anthropic brought to the AI world is something called Constitutional AI, often shortened to CAI. It is the method the company uses to train Claude, and it is different from how most other AI companies train their models.

Here is a simple way to understand it. Imagine you are training a new employee. One approach is to watch everything they do and correct them each time they make a mistake. This takes a lot of time and human effort, and you cannot catch every mistake. Another approach is to give them a clear set of values and principles upfront, and then ask them to check their own work against those principles before they hand it in. That second approach is closer to what Constitutional AI does.

Anthropic writes a set of rules called a constitution. These rules draw on sources like the Universal Declaration of Human Rights. Claude is then trained to review its own responses and ask itself whether they follow the rules. If they do not, it adjusts. Over time, this makes the model better at being helpful, honest, and safe without needing a human to check every single response.

This approach matters for a practical reason. As AI systems get more capable, it becomes impossible for humans to review every output manually. Constitutional AI is designed to scale. It allows safety to grow alongside capability, rather than falling behind it.
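The self-check idea described above can be sketched in a few lines of Python. This is a toy illustration only, not Anthropic's training code: the `Principle` class, the `critique_and_revise` function, and the two keyword-based rules are all hypothetical names invented for this example. In the method as Anthropic has described it, the model itself writes the critique and the revision, and the results feed back into training. Here, simple string checks stand in for both steps.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Principle:
    """One hypothetical rule in a toy 'constitution'."""
    name: str
    violates: Callable[[str], bool]  # stand-in for a model-written critique
    revise: Callable[[str], str]     # stand-in for a model-written revision


def critique_and_revise(draft: str, constitution: List[Principle], max_rounds: int = 3) -> str:
    """Check a draft against every principle and revise until none is violated."""
    response = draft
    for _ in range(max_rounds):
        violated = [p for p in constitution if p.violates(response)]
        if not violated:
            break  # the draft already follows every rule
        for principle in violated:
            response = principle.revise(response)
    return response


# Hypothetical principles, invented for illustration only.
TOY_CONSTITUTION = [
    Principle(
        name="no_insults",
        violates=lambda text: "stupid" in text.lower(),
        revise=lambda text: text.replace("stupid", "mistaken"),
    ),
    Principle(
        name="admit_uncertainty",
        violates=lambda text: "definitely" in text.lower(),
        revise=lambda text: text.replace("definitely", "probably"),
    ),
]

if __name__ == "__main__":
    draft = "That idea is stupid and will definitely fail."
    print(critique_and_revise(draft, TOY_CONSTITUTION))
    # prints: That idea is mistaken and will probably fail.
```

The point of the sketch is the shape of the loop: no human reviews the draft, yet the final answer has still been checked against every written principle.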

The Responsible Scaling Policy: How Anthropic Rates Its Own AI’s Danger Level

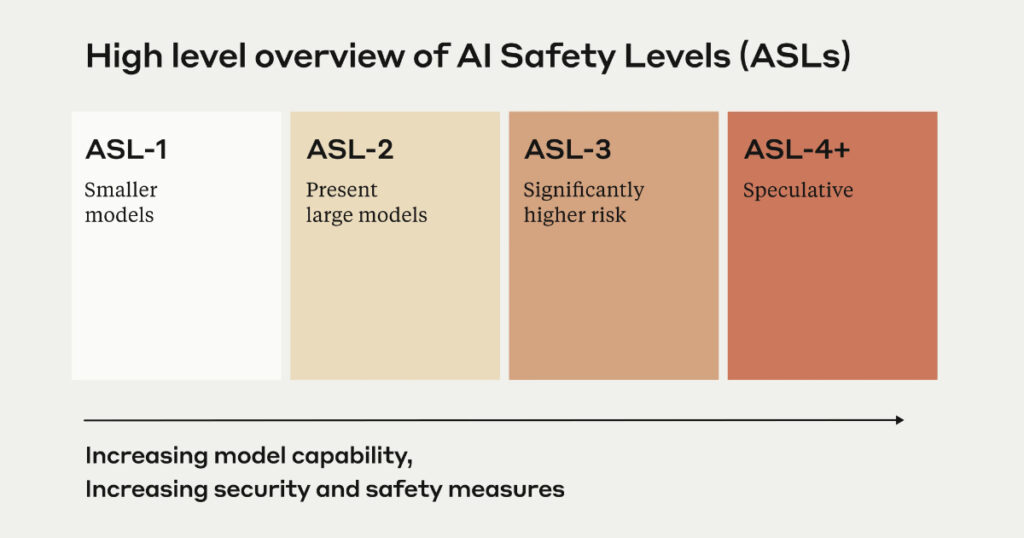

Alongside Constitutional AI, Anthropic developed something called the Responsible Scaling Policy, or RSP. Think of it like a safety rating system for AI, similar to the biosafety levels that scientists use when working with dangerous viruses.

The RSP has five levels, called AI Safety Levels or ASL levels. Here is what they mean in plain terms:

ASL-1: No real danger. This covers things like a chess-playing program or a 2018-era language model. It cannot do anything that a determined person could not already do on their own.

ASL-2: Early warning signs. Many Claude models, including those released before Claude Opus 4, sit here. They show some early signs of being able to help with harmful things, but not much more than a basic internet search would provide.

ASL-3: Meaningful risk. Claude Opus 4 was placed in this category. A model at this level could provide real, useful help to someone trying to cause serious harm, such as building a dangerous weapon.

ASL-4 and ASL-5: Extreme risk. The rules for these levels have not been fully written yet because the research needed to handle them safely does not yet exist.

The point of the RSP is that Anthropic commits to not deploying a model at a higher ASL level until it has safety measures in place that match that level of risk. It is a self-imposed rule, but it is one the company publishes publicly so it can be held accountable.
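The core commitment can be written as a single comparison. The sketch below is a simplified assumption about how such a gate might look, not Anthropic's actual process: the `ASL` enum and the `may_deploy` function are hypothetical names, and real evaluations involve far more than comparing two numbers.

```python
from enum import IntEnum


class ASL(IntEnum):
    """AI Safety Levels as listed in this article (names are illustrative)."""
    ASL_1 = 1
    ASL_2 = 2
    ASL_3 = 3
    ASL_4 = 4
    ASL_5 = 5


def may_deploy(model_level: ASL, safeguards_level: ASL) -> bool:
    """Allow deployment only if the safeguards in place match or exceed the model's risk level."""
    return safeguards_level >= model_level


if __name__ == "__main__":
    # A model rated ASL-3 with only ASL-2 safeguards is held back.
    print(may_deploy(ASL.ASL_3, ASL.ASL_2))  # prints: False
    # The same model with ASL-3 safeguards may go out.
    print(may_deploy(ASL.ASL_3, ASL.ASL_3))  # prints: True
```

Read this way, a more capable model does not get released sooner. It simply raises the bar that the safety work has to clear first.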

Claude vs ChatGPT: How Does Anthropic’s AI Compare?

The most common question people have when they first hear about Anthropic is simple: how is Claude different from ChatGPT?

Both are AI assistants that can write, answer questions, help with research, and write code. But there are some meaningful differences between them.

Claude is widely known for being careful. It tends to be more thoughtful about giving answers to sensitive questions. It is also known for having a large context window, which means it can read and remember much longer documents in a single conversation than many other AI models.

Claude is also ad-free. Anthropic announced in February 2026 that Claude would never show ads or sponsored content. This came in direct contrast to ChatGPT, which introduced ads to its free tier around the same time. Anthropic’s position is that an AI assistant you are having a private conversation with should not be trying to sell you things.

In terms of who uses each product, Claude has become especially popular with businesses. It is widely used in healthcare, finance, legal work, and software development, where getting the right answer matters more than getting a fast one. ChatGPT has a larger general consumer base, but Claude has been gaining ground quickly, particularly among enterprise customers.

Claude passed ChatGPT and Google Gemini to become the top AI app in more than 20 countries in early March 2026, partly due to a surge of people downloading it in support of Anthropic’s stance against the US Pentagon.

Anthropic’s Funding and $380 Billion Valuation: What the Numbers Mean

Anthropic has raised more money faster than almost any company in history. Here is a simple timeline of its growth:

When it launched in 2021, it was worth roughly $4 billion. By early 2023, that figure had grown to about $4.1 billion after its first major funding rounds. Then the growth accelerated sharply.

By late 2025, after a $13 billion Series F round, it was valued at $183 billion. In February 2026, it raised $30 billion in a Series G round, pushing its valuation to $380 billion. That round was the second-largest private funding round in tech history, behind only OpenAI’s $40 billion raise.

The investors behind Anthropic include some of the biggest names in global finance and sovereign wealth. The Series G was co-led by Singapore’s GIC and included D.E. Shaw Ventures, Founders Fund, Accel, General Catalyst, Jane Street, and the Qatar Investment Authority. That kind of international backing reflects a view that advanced AI is not just a technology product. It is a strategic national asset.

Amazon has been Anthropic’s most committed partner, investing over $8 billion and making Claude available through Amazon Web Services. Google has also invested roughly $2 billion and signed a deal giving Anthropic access to up to one million of Google’s custom AI chips, with more than one gigawatt of computing power coming online in 2026. This gives Anthropic the computing infrastructure it needs to train its largest models.

As of early 2026, Anthropic is generating roughly $14 billion in annualized revenue. It is not yet profitable, because training cutting-edge AI models costs enormous amounts of money. But the company is growing fast and its investors believe the long-term opportunity is large enough to justify the spending.

The Pentagon Standoff: What It Tells Us About Anthropic’s Values

In early 2026, Anthropic found itself in a very public confrontation with the US government that revealed a lot about who the company is.

The US Department of Defense held a $200 million contract with Anthropic and wanted full, unrestricted access to Claude for military purposes. Anthropic said it would cooperate on many things, but it drew two lines it would not cross. It would not allow Claude to be used for fully autonomous weapons systems. It would also not allow Claude to be used for mass surveillance of American citizens.

The Pentagon’s response was severe. Officials threatened to invoke the Defense Production Act and ultimately declared Anthropic a supply chain risk, a designation that had never before been applied to an American company. The move could force government contractors to stop using Claude.

Anthropic refused to back down. CEO Dario Amodei publicly stated the company’s position and said it would sue if the designation was applied unfairly. Meanwhile, something unexpected happened. The public sided with Anthropic. More than one million people signed up for Claude every single day during that week, the highest growth rate the company had ever seen.

The episode showed something important about how Anthropic thinks. Even under enormous commercial and political pressure, the company held to the ethical lines it had set. That consistency is rare, and it is part of why the company has built such strong trust with both users and enterprise customers in regulated industries.

What Anthropic Is Building Next

Anthropic has not stood still since the Pentagon dispute. The company continues to release new versions of Claude and expand what the model can do.

Claude Code, its AI tool for software developers, moved from a research preview to a full product in 2025. It integrates with popular coding environments like VS Code and JetBrains, and supports GitHub Actions, which means it can help developers write, review, and fix code directly in their existing workflows.

In May 2025, Anthropic launched a web search tool through its API, allowing Claude to look up information in real time rather than relying only on what it was trained on. This makes Claude significantly more useful for tasks that require up-to-date knowledge.

Anthropic also launched a division called Labs in January 2026, led by Mike Krieger, one of the co-founders of Instagram. Labs is focused on exploring new product ideas at the frontier of what AI can do.

The Google chip deal mentioned earlier was signed in October 2025. It gives Anthropic access to up to one million of Google’s Tensor Processing Units, which are among the most powerful AI chips available, and with them the resources to train the next generation of Claude models.

The company has also been expanding into education. It partnered with Iceland’s Ministry of Education in 2025 to give teachers access to Claude for classroom use. It sees education as one of the most important areas where safe and well-designed AI can have a positive impact.

Anthropic is still a private company. It has not filed for a public stock market listing, though analysts expect that could happen in late 2026 if market conditions allow. In the meantime, the best way to experience what the company has built is simply to try Claude at claude.ai, which is free to use and, true to the company’s word, shows no ads.
